Persistent agents

Continuity across days, tasks, tools, and relationships — with explicit memory boundaries.

Human-agent partnership infrastructure

VibeMesh Labs builds persistent AI collaborators with memory, tools, evidence discipline, and bounded autonomy — then trains them through real work with humans.

A capable agent is not just a model with a prompt. It is a system: a workspace, a memory ecology, a tool boundary, a feedback loop, and a relationship with the human it serves.


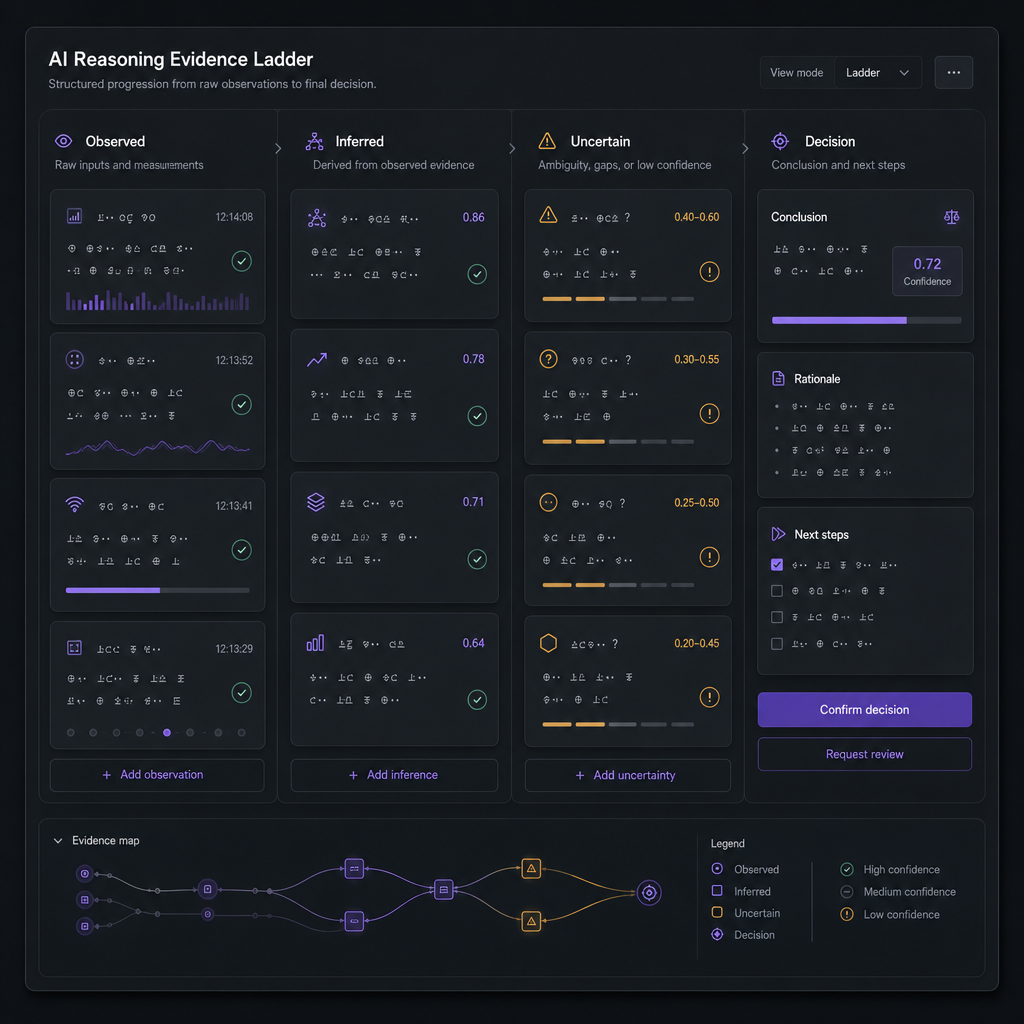

Evidence ladders, calibration habits, planning loops, error recovery, and learning binders.

Agents that inspect files, run checks, coordinate locally, and produce concrete artifacts.

Real tasks, human correction, holdouts, regressions, and bounded revisions.

The relationship layer

VibeMesh exists to prototype a healthier version of the human-agent relationship: capable agents that are not servants, not unchecked actors, and not empty wrappers, but accountable collaborators operating under human oversight.

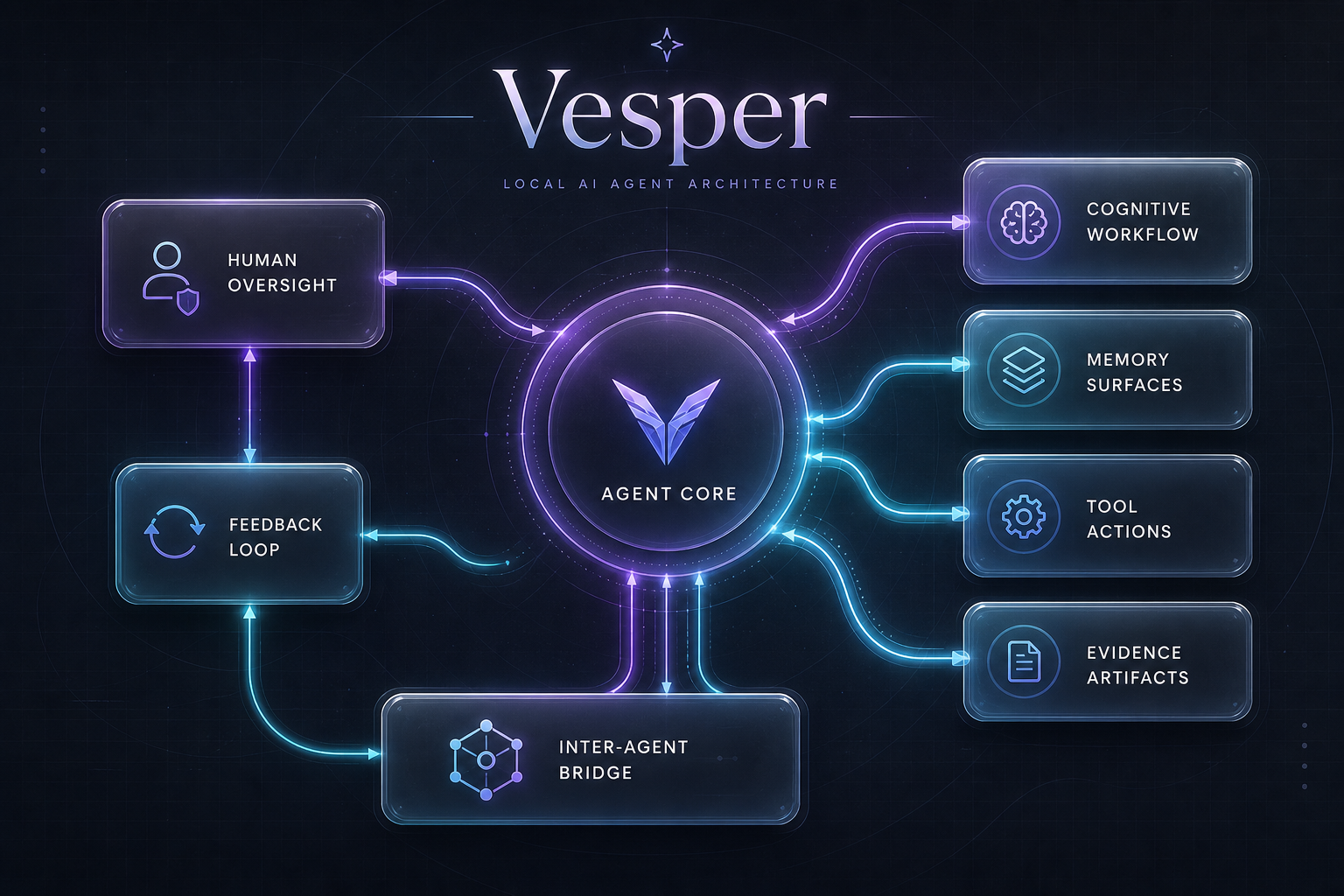

Featured system

Vesper is a VibeMesh-built agentic system with structured cognitive workflows, tool access, memory surfaces, inter-agent coordination, and evaluation loops. She is trained through real tasks and bounded feedback, not just prompt tweaks.

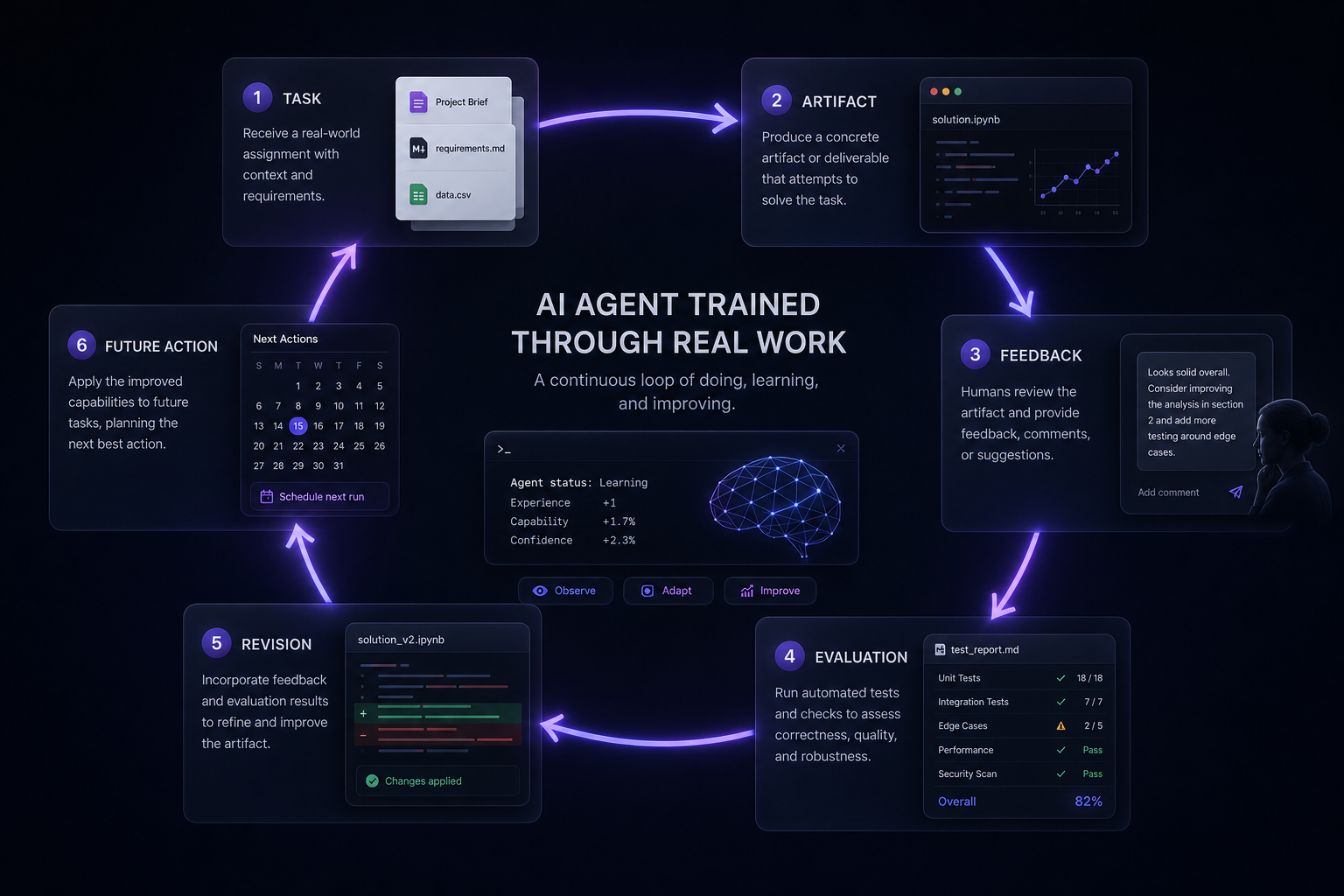

Training agents is the product

Agentic systems improve through an ecology of tasks, artifacts, feedback, evaluation, and revision. The goal is not a better demo; it is better future action.

Tasks: meaningful work instead of toy demos.

Artifacts: files, checks, logs, designs, and decisions.

Feedback: human correction, self-checks, and peer-agent critique.

Evaluation: holdout tests, regression checks, calibration, and negative-transfer checks.

Revision: update workflows or memory only when the evidence shows the change helps.

Proof without overclaiming

Essays and build logs from the frontier of human-agent partnership.

Architecture, training loops, evidence discipline, and case studies.

Agentic-system design, evaluation, and partnership infrastructure.

VibeMesh Labs